
This is because we need to host the image in a local or public registry, else the cronJob cannot find the image. However, if we see the POD logs we see that the image cannot be found kubectl logs connect-1603564560-q6d4t Error from server (BadRequest): container "connect" in pod "connect-1603564560-q6d4t" is waiting to start: image can't be pulled Let’s validate the pods, looks like it is being run kubectl get po NAME READY STATUS RESTARTS AGE connect-1603564500-6dk2m 0/1 ImagePullBackOff 0 2m18s connect-1603564560-q6d4t 0/1 ImagePullBackOff 0 78s connect-1603564620-dtrgf 0/1 ImagePullBackOff 0 18s postgres-postgresql-0 1/1 Running 0 29m Let’s validate the cronjob kubectl get cronjobs NAME SCHEDULE SUSPEND ACTIVE LAST SCHEDULE AGE connect */1 * * * * False 1 13s 72s Let’s apply the same using kubectl kubectl apply -f c.yml cronjob.batch/connect created apiVersion: batch/v1beta1 kind: CronJob metadata: name: connect spec: schedule: "*/1 * * * *" jobTemplate: spec: template: spec: containers: - name: connect image: postgres_image restartPolicy: OnFailure Kubernetes documentation has details on the same. Let’s create a cronjob that tries to run the script we just wrote every minute (as per our requirements) in the Kubernetes cluster using a YAML file. Sending build context to Docker daemon 6.144kB Step 1/6 : FROM python:3.8-slim -> 41dcfe21e8fd Step 2/6 : WORKDIR /app -> Using cache -> 00a8320e3c52 Step 3/6 : COPY requirements.txt /app -> Using cache -> e67a21cc9bb8 Step 4/6 : COPY connect_db.py /app -> Using cache -> 38a6058e0000 Step 5/6 : RUN pip install -r requirements.txt -> Using cache -> 07495bf00820 Step 6/6 : CMD python connect_db.py -> Using cache -> 97b09c86daa9 Successfully built 97b09c86daa9 Successfully tagged postgres_image:latestĬ. 
Requirements.txt sqlalchemy=1.3.16 psycopg2-binaryĭockerfile FROM python:3.8-slim WORKDIR /app COPY requirements.txt /app COPY connect_db.py /app RUN pip install -r requirements.txt CMD python connect_db.pyĬonnect_db.py import sqlalchemy as db engine = connection = nnect() metadata = db.MetaData(bind=connection, reflect=True) print(metadata)ī.

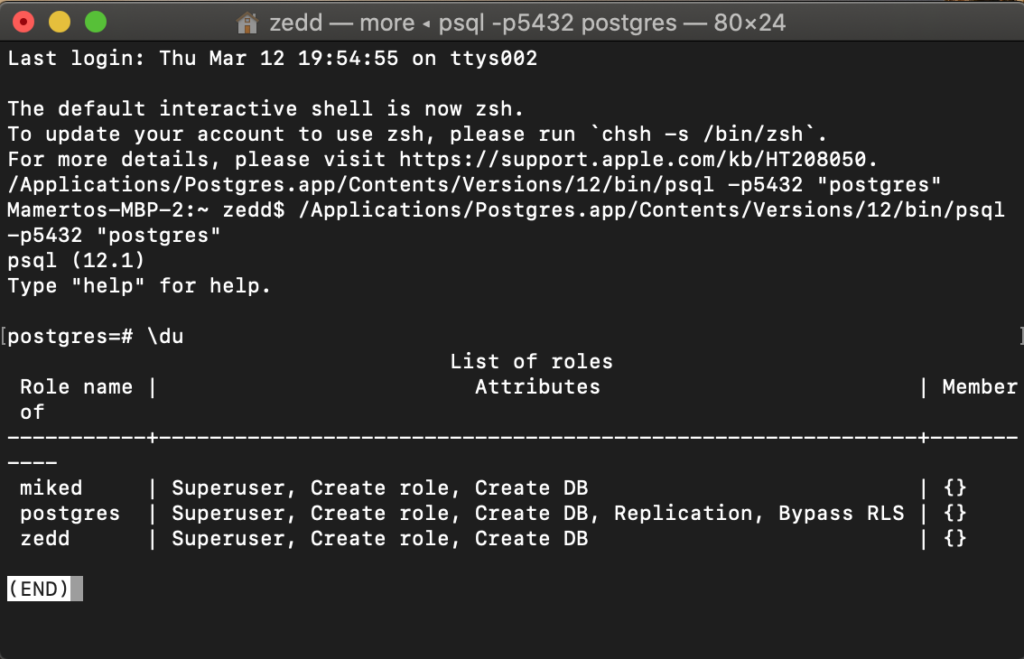

Let’s start by creating an image to run this script. We can add this as part of a Docker image and a container running in a POD. How do we run this script as part of the Kubernetes cluster?Ī. Python script to connect to the DB import sqlalchemy as db engine = connection = nnect() metadata = db.MetaData(bind=connection, reflect=True) print(metadata)Ģ. Username => postgres Password => $POSTGRES_PASSWORD variable as found previosuly DB name => postgres Let’s use a simple python script connect_db.pyto connect to the DB. Looks like we can insert and query from the DB postgres=# CREATE TABLE phonebook(phone VARCHAR(32), firstname VARCHAR(32), lastname VARCHAR(32), address VARCHAR(64)) CREATE TABLE postgres=# INSERT INTO phonebook(phone, firstname, lastname, address) VALUES('+1 1', 'John', 'Doe', 'North America') INSERT 0 1 postgres=# SELECT * FROM phonebook ORDER BY lastname phone | firstname | lastname | address -+-+-+- +1 1 | John | Doe | North America (1 row) Add a cronjob which can connect to the DB every minute Validate if helm is installed correctly Let's try K8 : helm version version.BuildInfo" | base64 -decode) Let's try K8 : kubectl run postgres-postgresql-client -rm -tty -i -restart='Never' -namespace default -image docker.io/bitnami/postgresql:11.9.0-debian-10-r48 -env="PGPASSWORD=$POSTGRES_PASSWORD" -command - psql -host postgres-postgresql -U postgres -d postgres -p 5432 Let's try K8 : minikube status minikube type: Control Plane host: Running kubelet: Running apiserver: Running kubeconfig: ConfiguredĢ.
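As a rough sketch of what the fix could look like, the CronJob can point at a registry-qualified image instead of the bare `postgres_image` name. The Python below builds the same manifest as a dict and prints it as JSON, which `kubectl apply -f` also accepts. The registry address `localhost:5000` and the helper names are assumptions for illustration, not values from this setup, and the image would still have to be pushed to that registry first. On Kubernetes 1.25 and later the `apiVersion` would need to be `batch/v1`, since `batch/v1beta1` CronJob was removed there.

```python
import json


def qualified_image(registry, repository, tag="latest"):
    """Join registry, repository and tag into a pullable image reference."""
    return f"{registry}/{repository}:{tag}"


def cronjob_manifest(image, name="connect", schedule="*/1 * * * *",
                     api_version="batch/v1beta1"):
    """Build the CronJob manifest from this post as a plain dict."""
    return {
        "apiVersion": api_version,
        "kind": "CronJob",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,
            "jobTemplate": {"spec": {"template": {"spec": {
                "containers": [{"name": name, "image": image}],
                "restartPolicy": "OnFailure",
            }}}},
        },
    }


if __name__ == "__main__":
    # localhost:5000 is a hypothetical local registry, not part of the original setup.
    image = qualified_image("localhost:5000", "postgres_image")
    print(json.dumps(cronjob_manifest(image), indent=2))
```

Saved under a name of your choosing, the output could be piped straight into `kubectl apply -f -`.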

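The connect_db.py script needs the Postgres password. One way to keep it out of the image is to read it from an environment variable when the container starts. Here is a minimal sketch of that idea. The variable name `POSTGRES_PASSWORD` follows the shell variable used earlier, and the host and port are the ones from the psql command. The helper names, and the step of passing the variable into the pod, are assumptions rather than part of the original script.

```python
import os
from urllib.parse import quote_plus


def build_db_url(password, user="postgres", host="postgres-postgresql",
                 port=5432, database="postgres"):
    """Assemble a PostgreSQL URL, escaping the password so special characters survive."""
    return f"postgresql://{user}:{quote_plus(password)}@{host}:{port}/{database}"


def db_url_from_env(env=os.environ):
    """Read the password from POSTGRES_PASSWORD (assumed to be set in the container)."""
    return build_db_url(env["POSTGRES_PASSWORD"])
```

connect_db.py could then call `db.create_engine(db_url_from_env())` in place of a hard-coded URL.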